How not to waste an AI for Science mission

On the regrettable necessity of thinking carefully before spending public money

This post is co-authored with Laura Ryan, and originally appeared on the Better Science Project Substack.

Missions – possible?

Everybody loves a good mission. The Manhattan Project compressed a decade of theoretical physics into three years of industrial-scale engineering and produced a device that ended a world war. Apollo took a president’s dare and, within the decade, landed two men on a body a quarter of a million miles away.

But the reason these examples are so oft-cited, more than half a century on, is that they’re rare. Most scientific progress doesn’t work this way, and we oughtn’t pretend it does. National missions are grand undertakings – they run on both ambition and architecture: the careful selection of goals matched with specific structures to deliver. They require the singular and deliberate concentration of effort, attention, talent, and a huge amount of resources on a well-defined objective; if everything’s a ‘mission’, nothing is.

The language of missions has been loose in British policy for some time now. Mariana Mazzucato popularised the concept amongst the wonks of Whitehall; the Conservative government’s Industrial Strategy adopted it; Labour now governs under five grand “missions” that straddle huge areas of domestic policy. The word has become somewhat promiscuous – it was only a matter of time before it reached DSIT.

The government’s AI for Science Strategy (published last November) does something refreshing in this space.[1] Instead of paying lip service to mission-ology, it makes a concrete commitment to one flagship mission: a specific, time-bound, falsifiable target to develop trial-ready drugs within 100 days by 2030. It also commits to selecting a further round of missions in 2026.

We were each co-authors of the Tony Blair Institute’s AI for Science paper that fed into the strategy. That paper did not recommend missions. But with one mission announced and more on the way, the stakes are high enough to warrant a more thorough examination. History shows that well-executed missions can produce spectacular results; recent experience shows there are plenty of ways for government to fall short. We want to try to help narrow that gap as much as possible, and make the AI for Science missions a success.

First, principles

AI for science matters to Britain. We are fundamentally a scientific nation; a considerable share of our prosperity and national character is derived from this fact. Britain’s scientific inheritance is one of the few unambiguous advantages we carry into this century, and AI will reshape what that inheritance is worth.

Science is about to transform: radically and within a generation. Getting this transformation right is among the more consequential things the government can do. Few countries have more to gain – or more to lose – than we do.

Our greatest challenge is embedding AI across the whole scientific landscape: building enabling infrastructure, generating and sharing high-quality data, incentivising upgrades to the tools and workflows on which scientists depend. But alongside that broader effort, there’s space for something more concentrated: specific programmes that marshal resources and attention toward defined scientific goals.

In this spirit, the AI4S strategy commits to a handful of missions – but what does that mean?

There are many species of ‘mission’: scientific moonshots versus the creation of broad societal goods; government as principal versus government as conductor. Do they mean a demanding, resource-intensive push toward a technical objective, like sequencing the human genome? Or do they mean a broad, systemic goal, like using AI to tackle the reproducibility crisis? These are each fundamentally different propositions, with different selection criteria, different delivery models, and different measures of success.

As a baseline, we should outline what we think DSIT intends. Per the strategy, the AI for science missions are aiming for technical and scientific breakthroughs, and sit closer to a conductor model – government orchestrating activity across academia, industry, and public institutions, concentrating resources, and using its convening power and national assets.

This is helpful framing as far as it goes, but it doesn’t go very far. We have a rough idea of the kind of missions they’re aiming for, but not yet any detail about how they’ll be run. And it is in these details that missions succeed or fail.

The credibility and success of the missions programme depend entirely on two things: the quality of what gets selected, and the delivery architecture that surrounds it. This post covers the first of these.

The second half of the problem – implementation – matters just as much as selection. We shouldn’t take it for granted that delivery will be up to par. Anyone who’s worked inside the system knows that government’s default mode is to treat the announcement as the product. Keeping a mission going depends on sustaining a punishing standard of rigour, and once political attention moves on, it can be difficult for senior civil servants to justify spending significant ongoing resources on yesterday’s news. Indeed, delivering missions well may require entirely new institutional architecture – both inside and outside government. We will return to this at a later date.

The good, the bad, and the ugly

Now that missions have been announced, proposals are sure to come thick and fast. Researchers, industry lobbyists, and think-tank operatives will rush to the new seam to pan for gold. Some ideas will be strong. Some less so: there is little cost to throwing ideas out there, and the policy ecosystem is not always known for its quality control. The reputational upside of having your domain selected is considerable; even unsuccessful bids tend to gain disproportionate airtime, while proponents seldom bear the delivery risk.

If you squint, just about anything can look like a “mission”, and we should be wary of uncritically memeing misguided ideas into the mainstream. More important than rushing to stake claims on specific domains is to establish what kinds of proposals are genuinely well-suited to be national missions; if the government is to select well, it needs to know what it’s selecting for.

The strategy selects five broad areas of science and technology where AI could unlock progress: engineering biology, fusion energy, materials science, medical research, and quantum technologies. Most of the strategy’s efforts will be directed at these domains – including missions.

Each area was selected on the grounds that it shows existing UK strength, alignment with wider UK strategy, and opportunities for AI-driven progress. It would be hard to disagree that any of the five clears that bar. But each is a hefty field. A huge variety of work within these areas will be important and deserving of funding and support; few problems are specifically national mission-shaped. The bar for domain selection was deliberately low: the bar for mission selection must be considerably higher.

To raise that bar, we need to know what we’re aiming for – to reverse-engineer what’s mission-shaped from an understanding of what national missions are, and how they work.

There’s good prior thinking on this within government that deserves to be dusted off. In 2018, the Council for Science and Technology – then chaired by Patrick Vallance, now Science Minister – published advice on how to run a successful mission programme. In their view:

“Missions should be ambitious, transformative programmes that address grand challenges. They must have a clear vision, be problem-led without pre-defined solutions, and have a quantifiable outcome. They should not consist of existing Government policy re-branded.”

They go on to say that successful missions require (and are characterised by) a fundamentally different approach to management and delivery: a single accountable leader, a dedicated cross-government team of sufficient critical mass, ring-fenced resources, simplified governance, and access to external expertise.

These elements are a good jumping off point for thinking about what makes a strong mission candidate, but they are generalised to any mission programme. What follows is our attempt to build on them: a set of criteria, some analytical, others practical, that we think should govern the selection of AI for science missions. It is not exhaustive, but it covers the ground where we think the risks of getting it wrong are highest.

1) Definitional clarity

What is AI for science?

“AI for science” is almost as promiscuous a term as mission. Does it mean science that makes use of machine learning? AI applied to the research ecosystem? Does it include research into artificial intelligence? And does it matter?

The government says the missions should aim for goals “that can only be reached through breakthrough scientific progress enabled by AI” and that missions may occur in priority areas where AI is “poised to play a significant role in accelerating progress.”

This is sentimentally a good starting point – we like breakthroughs. But as the programme gets underway and selection approaches, government should articulate a considerably more specific definition. Almost every field and type of science now uses AI in some form – as a workhorse for data analysis, as an aid to simulation, or as an accelerant to processes that were previously manual and slow. Most serious new research programmes launched today will have AI woven in as a matter of course. If the test is simply whether a mission involves science and is significantly enabled by AI somewhere along the way, then virtually anything qualifies.

We think the threshold must be more demanding: an AI for science mission should be one where AI is not merely present but constitutive – where the goal is unachievable without specific novel AI capabilities, or where the mission itself must build the foundational assets on which those capabilities depend. This is a harder test than “science enabled by AI,” but it is the only one that gives the label real discriminating power.

The mission programme may buckle if swathes of proposals can claim the “AI4S” label with equal plausibility. It must be able to distinguish genuinely exciting opportunities for value-creation from rebranded scientific programmes that would have been proposed regardless.

2) Quality over quantity

Can we afford another?

Good national missions are expensive. The Vaccine Taskforce agreed a £5.2 billion programme business case with the Treasury. The London 2012 Olympics cost north of £9 billion. AI for science missions will not build stadiums or procure vaccines at breakneck speed during a pandemic; but the resources required to stand up anything deserving of the name are nevertheless substantial.

The government has committed up to £137 million to the entire AI for Science Strategy. That figure covers a lot: data infrastructure, fellowships, doctoral training, interdisciplinary teams, and missions. Not all of it – probably not even most of it – will go to national missions. The strategy also doesn’t say how many missions it intends to select: it commits to “a handful”, so probably more than two, fewer than ten. In the most restrained possible case, we are talking about an absolute maximum of low tens of millions each.
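As a rough check on that ceiling, the sketch below divides the full budget across a hypothetical three to nine missions. It assumes, unrealistically, that every pound of the £137 million goes to missions and is split evenly; the function and variable names are illustrative, not drawn from the strategy.

```python
# Rough ceiling on per-mission funding, assuming (unrealistically) that the
# entire strategy budget went to missions and was split evenly between them.
TOTAL_BUDGET_M = 137  # £ million, the "up to" figure quoted from the strategy


def per_mission_ceiling(n_missions: int, total_m: float = TOTAL_BUDGET_M) -> float:
    """Upper bound on funding per mission, in £ million."""
    if n_missions <= 0:
        raise ValueError("n_missions must be positive")
    return total_m / n_missions


# "A handful" is read here as more than two and fewer than ten missions.
ceilings = {n: round(per_mission_ceiling(n), 1) for n in range(3, 10)}

for n, ceiling in ceilings.items():
    print(f"{n} missions: at most £{ceiling}m each")
```

On these assumptions the ceiling runs from roughly £46 million per mission (three missions) down to roughly £15 million (nine), before anything is set aside for data infrastructure, fellowships, or training.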

In scientific programmes, this does not get you very far. Crowding in private or philanthropic funding then – as the government has said it wants to do – becomes a condition of viability. But it is also in a sense definitional: missions require others to have skin in the game, so the selection process should favour candidates where such co-investment is not just possible but natural.

Each new mission is also competing for the same inputs: leadership talent with both scientific credibility and delivery capability, compute allocation (the strategy’s own “system takeover” model ties up 80% of national capacity for two weeks per mission), and sustained political attention. These are extraordinarily difficult to parallelise.

The government has said that part of the reason it is pursuing missions is to ensure resources “are targeted towards shared goals instead of being spread thinly across diffuse opportunities.” That’s the right instinct, but the pressure to add missions will be considerable. Announcing the next one is virtually costless and would confer considerable political upside in the short term (and the goodwill of the research base). Many ideas will be advocated for with great force. Resisting that pressure will be the government’s first and most important act of discipline.

3) Meaningful, falsifiable targets

Can we describe success precisely enough to know if we got there?

The most basic question a mission candidate must answer is also the one most easily fudged: what, precisely, would it mean to succeed? A mission needs a target that’s concrete, specific, and tied to a deadline: we will cross this threshold by this date. “Trial-ready drugs within 100 days by 2030” is a target that government can succeed or fail at, which makes it stick out from the usual mush of “strengthen the UK’s position” or “unleash innovation.” A target that cannot be clearly met or missed is moot.

The target must also be set at the right altitude. Too modest, and it fails to justify the machinery of a national mission. Too unrealistic and no one will take it seriously. The target should sit in the narrow band where achievement is genuinely uncertain but plausibly within reach – stretching enough to demand extraordinary effort, credible enough that failure reflects on the programme rather than our level of ambition.

A target must also be compatible with the delivery model it depends on. If meeting the goal would require fundamentally re-orienting ongoing activities across research communities, industry, or other areas of government, then either the mission team needs the mandate and resources to drive that reorientation, or the goal needs to bend to accommodate reality. A target that assumes a level of systemic coordination the delivery architecture cannot provide is a fiction.

As any scientist will tell you, perhaps the most critical consideration when setting a goal or readout metric is correct causal attribution. You need to be confident that your mission was the cause of (or at least a significant contributing factor to) the outcomes you hope to observe. This rules out a lot. A target pegged to macro-level indicators like total factor productivity, R&D intensity, or private investment in research would fail immediately: these indicators are driven by such a wide range of forces that no single programme could honestly claim ownership of the result.

Equally, a target pegged to broad research assessment processes would fail. The REF, for instance, is shaped by so many variables that no intervention could credibly take credit – and its cycles are far too slow and too infrequent to function as a meaningful feedback mechanism in the first place. Good mission targets need verifiable causal chains between the intervention and the result. This also means that, wherever possible, progress should be independently verifiable – not self-assessed by the mission team or the department that sponsors it.

And finally, a really serious mission must be capable of embarrassing its sponsors. To know when you have succeeded, you have to know when you’ve failed – and that failure must be visible enough to sting. The London 2012 Olympics could not be redefined as a partial success if the stadium was unfinished on opening night: the date was fixed and the world was watching. Pressure is the mechanism by which missions sustain political weight; visibility keeps everyone honest.

4) Multi-faceted challenges…

Is the problem the right shape for a mission?

Scientific research can come in all shapes and sizes. Some efforts are hell-bent on chasing a single quarry; some are sequential, linear pipelines; some traverse vast and directionless oceans of understanding. AI can likely play a role in all of these. What matters for mission suitability is the shape of the problem.

At one end of the spectrum, AI is already accelerating well-contained processes across dozens of fields – improving image classification in pathology, say, or optimising the geometry of a turbine blade. These are discrete technical challenges where AI is applied directly to a known bottleneck, without requiring the government-anchored orchestration of multiple actors. Some of these efforts are enormous in their ambition and impact, but the bottleneck is capability, not coordination, and they don’t need the machinery of a national mission.

At the other end sit the great diffuse frontiers: broad research spaces that would benefit greatly from widespread AI adoption, but without a defined north star – no singular goal that requires a grand coordination of effort. Open-ended exploration across multiple fronts lacks the bounded structure a mission demands, with no clear map of pathways that can be shortened. ‘Understanding the mechanisms of neurodegeneration’, for instance, is a functionally edgeless space containing countless local bottlenecks that AI might help relieve. But the science advances on a broad front; you can’t set a deadline for a problem with no vision of what a ‘solution’ looks like. A mission is not a general-purpose strategy; it’s a siege engine – and it needs a wall to batter against.[2]

The sweet spot lies between these poles. The right candidate for a mission should aim for a scientific or technical goal that was previously out of reach, requiring a multi-stage process and coordination between disparate actors who won’t align on their own – a network of distinct challenges. Crucially, there must be a credible account of why an AI-oriented mission can shift the dial.

5) …that need government intervention

Does government play a necessary role here?

There should also be an understanding of why government involvement is necessary, and what a national mission can offer that can’t be achieved otherwise. The strategy talks about missions “galvanising activity and coordination across UK industry and academia”. This is a natural place for government to be, and aligns with the sweet spot described above. But government can only provide so much funding and bandwidth, so it should be very strict about discriminating on the basis of additionality.

That said, additionality should be assessed against the full range of what government can uniquely provide: access to, or redeployment of, existing national assets – including public facilities or secure datasets; coordination and brokerage power that can anchor the disparate efforts of multiple actors inside and outside government; and help with addressing policy or regulatory blockers.

We may think more about some of these concepts in a future delivery-focussed piece. For now, it’s sufficient to say that if the private sector or public institutions could get there with a push in the right direction (or with barriers removed), it’s probably not a good candidate.

Let’s apply these last two tests to the drug discovery mission. On the shape of the research problem: the preclinical pipeline – target discovery, hit identification, binding affinity prediction, lead optimisation, ADMET modelling, and preclinical testing – is sequential, well-understood, and (critically) slow for reasons that AI seems specifically suited to address. A mission approach is sensible because there are multiple specific stages where specific AI capabilities produce specific acceleration.

But the problem isn’t merely scientific – if it were, then the research base and pharmaceutical industry would get there on their own. Critical enablers require targeted action from government: offering usage of national facilities, establishing new public infrastructure like the Health Data Research Service, and reforming regulation to enable the Medicines and Healthcare products Regulatory Agency to accept AI-generated evidence. These are public goods or pre-commercial activities that the market won’t supply, and collective action problems that no one company or institution has the incentive to address.

6) Consideration of existing national assets

Are we playing to our strengths?

If you want to play missions on easy mode, you don’t begin from a standing start – with a budget this modest you can’t afford to. Far better to leverage what already exists to give the mission every possible advantage. What counts as “enough” will vary by domain, but the question for any candidate is simple: does the UK already possess the assets needed to deliver, or are we planning to build them as we go?

For drug discovery, the existing asset base is comprehensive. The UK has considerable strength and depth across research talent, high-quality data, and world-leading institutions and infrastructure. But there is also an established commercial and regulatory ecosystem beyond the lab ready to translate AI-derived treatments into products and improved patient outcomes. Not every mission will need this kind of end-to-end readiness, but existing assets mean the mission can focus its limited resources on the genuinely hard problems. Whatever the relevant assets are for delivery in a given programme, the mission is much more likely to succeed if they’re already in place.

But ‘existing assets’ requires more granular thinking than simply listing major institutions. An asset could equally be a specific capability – such as a recent breakthrough in experimental technique – that an AI mission can exploit. For example, a recent breakthrough in ultra-high-throughput crystallography at the Diamond Light Source has enabled researchers to generate structural data at a scale orders of magnitude greater than was previously possible. This is the kind of step-change that can make an AI mission newly viable, but it required deep domain awareness (and external expertise) to recognise its potential for the drug discovery mission. Learning from this, DSIT should undertake a detailed capability scan, mapping breakthrough experimental technologies that could generate the foundations on which AI-driven science depends.[3] If you don’t know what you have, you can’t play to your strengths.

There is a trade-off here. In some cases it will be acceptable – desirable, even – for a mission to build on a more nascent ecosystem rather than exploiting existing institutional strengths. This might be justified where strategic necessity demands it, or where an emerging field shows extraordinary promise but lacks the critical mass to develop without a deliberate concentration of effort. Missions can be a powerful vehicle for fanning early sparks into something larger. But the less you have in place at the outset, the more honest you’ve got to be about what that means for deliverability and timelines. A mission that must first construct its own foundations before it can pursue its core objective is a fundamentally riskier proposition – and the timescales involved must be compatible with the urgency that justifies a mission approach in the first place.

7) Mission Control

Is it governable at the right level?

Missions succeed or fail on the strength of decisions taken by the right people, at the right level, at the right time. Every Manhattan Project needs its General Groves, and the enabling operating system around him that turns individual decisions into coordinated delivery at scale. The science is critical, but what makes a mission a mission is the deliberate concentration of authority, resources, and strategic vision under a defined line of command, oriented towards delivery.

Government departments typically aren’t accustomed to operating this way. The AI for Science strategy contains a long list of activities that appear to support the drug discovery mission, but as yet there isn’t much detail on how this or future missions will be governed.

Everything turns on the answer: it’s the difference between simply defining targets and outlining commitments – words on pages – versus something that can actually be done. Will these “missions” essentially be funds attached to loose collections of already ongoing complementary activities, operating under a shared umbrella heading? Will they be governed through the familiar machinery of “deliverology”, where plans are set at the outset, milestones tracked, and deviations flagged? Or will mission leaders be given genuine authority to prosecute the problem as they see fit – the power to marshal and redeploy resources, and the freedom to pivot?

We think a true mission must conform with the third model, though we find it hard to pinpoint many examples from recent history where the government has done this successfully.[4] If this is what DSIT is aiming for in its AI for science missions (and we think it should be), we would propose the following as non-negotiables.

A mission needs legible authority: a responsible owner with a strong mandate and a direct line to ministers. It needs clear delegated decision-making, including on spending; excellent situational awareness of who is doing what, including outside government, and an understanding of where external actors must be drawn in. It needs a control hub – an organising mind capable of seeing the whole board and directing resources accordingly. It needs the right expertise deployed in the right roles. And it needs enough spending power to get the job done.

This isn’t a novel prescription. Vallance’s 2018 mission principles call for essentially this: a single accountable leader, a dedicated cross-Whitehall team of around eight to ten people, ring-fenced funding,[5] and simplified governance. If Vallance still wants to follow these principles (and we think he should) then he should appoint mission leads with the requisite technical expertise and empower them accordingly.

These principles also give us a practical test for whether a proposal is genuinely mission-shaped. If you can’t imagine a single person being held accountable for whether the target was met, the mission is probably too diffuse. If the delivery team would need to span so many domains that no group of ten people could cover it and act as the strategic brain, it is probably too broad. The drug discovery mission, whatever its eventual governance arrangements, could plausibly be run this way. That won’t be true of every proposal.

8) Political durability

Can the mission survive political changes?

A mission that’s analytically perfect but politically fragile is a bad mission, because it will be defunded or hollowed out long before it delivers. Missions require sustained political commitment across spending reviews and ministerial reshuffles – a mission that can’t sell itself to a future minister who wasn’t involved in its creation is living on borrowed time.

There are several ways to build this resilience. The first is structural: embedding the mission within the ecosystem – weaving it into the fabric of multiple strategies such that it becomes difficult to unpick without disturbing something else, and is in the interests of many. The drug discovery mission is notable for how many existing government commitments it connects to. The Industrial Strategy, the Life Sciences Sector Plan, the Life Sciences Healthcare Goals, the strategy for replacing animals in research – all converge on the same set of objectives. This reflects the fact that drug discovery sits at a point of unusually high strategic density, where multiple policy goals are served simultaneously. International commitments and private sector co-investment can serve the same anchoring function: the more a mission is entangled with obligations that extend beyond a single department’s discretion, the harder it becomes to kill it.

The second is narrative. A mission that tells a good story and can be easily understood and defended has a structural advantage over one that’s more abstract or bland. Why does this matter to people’s lives? Why does it matter for Britain? A credible link to economic growth, public health, or national security is a load-bearing feature of the mission’s design.

The third is political constituency. Missions that create place-based effects generate local champions. Missions that attract cross-party support and ownership survive changes of government. Missions that connect to long-standing public priorities – health, security, cost of living – are electorally salient. None of this is a substitute for scientific merit, but scientific merit alone has never been sufficient.

Without an active champion (or team) invested in a mission’s survival, politics tends to be neglected entirely. Many propositions that could otherwise make plausible AI4S missions won’t naturally be politically sexy – and that can make them vulnerable to shifting political winds. The answer is not to ignore this reality, or to retreat into academia and hope that distance from the political battlefield confers safety. Though not necessarily mission-critical, any proposal should engage seriously with the political context it inhabits. The question for each candidate is stark: how many different reasons does the government have to care about this in five years’ time?

All to play for

There is plenty of reason to doubt that these missions will come off. The budget is modest, the institutional capacity to run missions properly is uncertain, the gravitational pull of the prevailing ways of working is intense. Missions are easy to announce, and brutally hard to deliver.

These tests are demanding because missions are demanding. It’s not lost on us that many of the most successful historical examples were driven by necessity: wartime pressures, the Space Race, the COVID-19 pandemic. These missions operated on a fundamentally different scale, but it would be naive not to recognise that maintaining critical mass around a mission may be impossible without the crushing pressure of a national crisis.

And failure may be not neutral, but negative. £137 million is a modest sum for national missions, but it could go a considerable distance in driving AI adoption across the research base. The opportunity cost of squandering it on a programme that collapses into business as usual would be a serious setback for AI for science – and for the credibility of ambitious government action more broadly.

And yet. It would be bloody brilliant if a mission worked. If the government can hold its nerve – apply rigorous selection criteria, resist the incentive to dilute, build up the organisational muscle, and refuse to let the programme degrade into a collective delusion – there is a chance. Britain needs to be able to do this.

AI for science is a great arena in which to recover the habit of national ambition, even if the budget suggests a country tiptoeing where it ought to stride. A well-executed mission programme would count twice over: once for the scientific progress, and again as a demonstration that the British state can still marshal resources towards a formidable goal and see it through. With sufficient will from the right people, we’ll see.

Our thanks to Sam Currie, Alex Chalmers, Alvin Djajadikerta, Rory Byrne, and Charlie Harris.

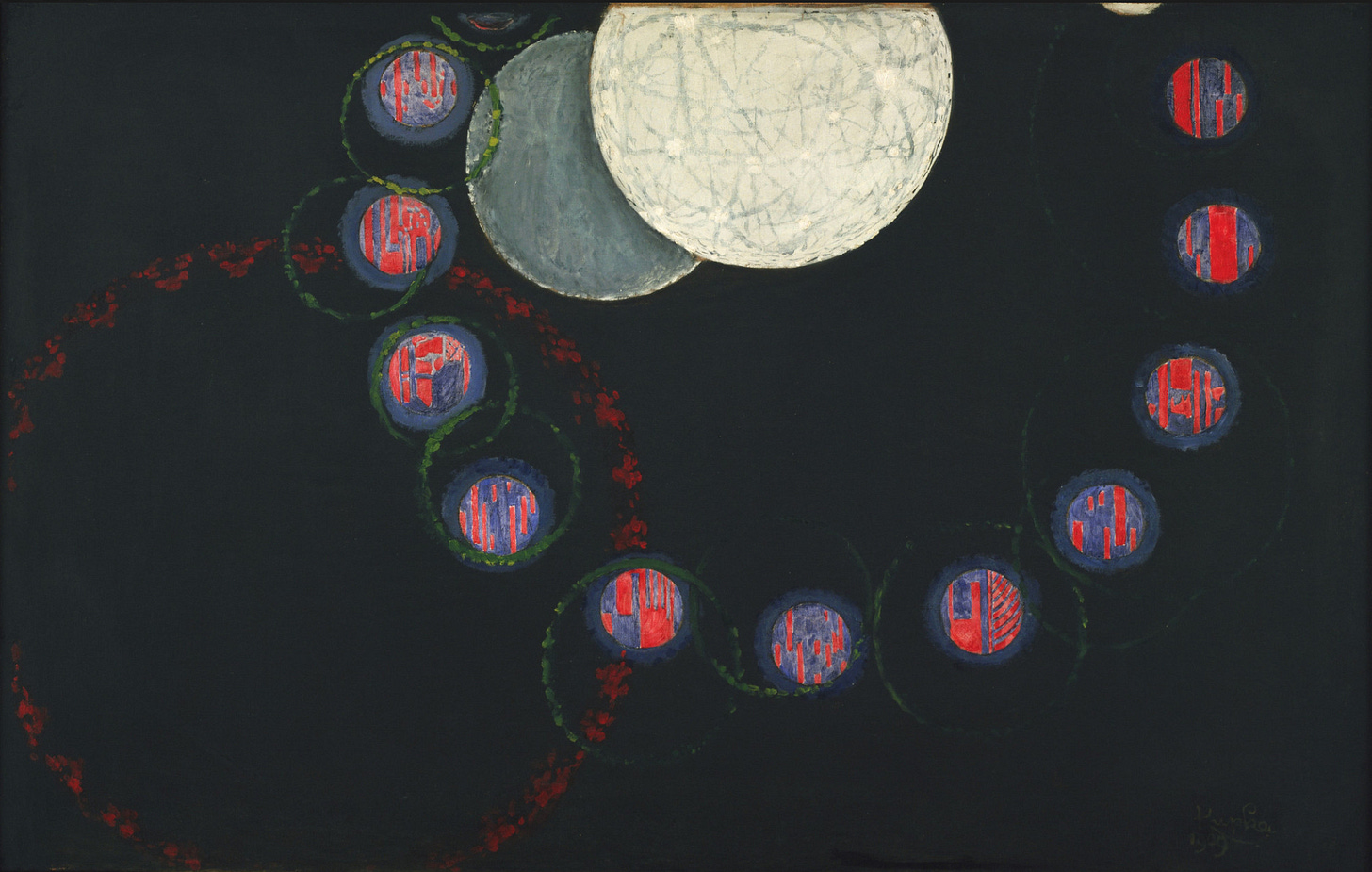

Cover image: The First Step, by František Kupka, 1910-13

[1] Recently there has been an uptick in strategies making such commitments – e.g. the national quantum strategy, “By 2035, there will be accessible, UK-based quantum computers capable of running 1 trillion operations and supporting applications that provide benefits well in excess of classical supercomputers across key sectors of the economy”. This makes a discussion around the architecture of mission selection and delivery all the more critical.

[2] For instance, Richard Nixon's 1971 'War on Cancer' was well resourced, but ultimately could not fully succeed as it treated a diffuse scientific frontier as though it were a bounded problem.

[3] These breakthroughs won’t always come from traditional academic institutions: the UK has a vibrant network of startups producing exactly this kind of stuff. DSIT should cast the net widely.

[4] The obvious exception is the Vaccine Taskforce, which is so oft-cited as to qualify as the exception that proves the rule.

[5] The history of ring-fenced funding surviving contact with UKRI's broader spending pressures is not encouraging.
